Adding Speech Input to Your Windows Phone App

In my earlier post I walked you through implementing voice commands in your Windows Phone app. If you don't have a clue what I am talking about, go through my earlier post here.

For those who don't have time to go through the entire post, here's a recap.

Recap

You can implement speech support in your own app very easily by following the steps given below.

1. Create a Voice Command Definition (VCD) file, which is essentially an XML file containing the commands your app supports.

2. Load the command file when the app starts up.

3. Deploy the app to the built-in emulator or to your device, hold the Start (Windows) button until the speech dialog is displayed, and speak a command you specified in your VCD file. Windows Phone will open the app for you.
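As a reminder of what step 2 looks like, here is a minimal sketch. It only builds inside a Windows Phone 8 project. The helper name InstallVoiceCommands and the file name VoiceCommandDefinition.xml are assumptions made for illustration, so substitute whatever your own project uses.

```csharp
using System;
using System.Diagnostics;
using Windows.Phone.Speech.VoiceCommands;

public partial class App
{
    // Hypothetical helper: call it once at startup, for example from Application_Launching.
    private async void InstallVoiceCommands()
    {
        try
        {
            // "VoiceCommandDefinition.xml" is an assumed file name; point the URI
            // at the VCD file that ships with your own project.
            await VoiceCommandService.InstallCommandSetsFromFileAsync(
                new Uri("ms-appx:///VoiceCommandDefinition.xml"));
        }
        catch (Exception ex)
        {
            // Installation can fail, for example when the VCD file is malformed.
            Debug.WriteLine("Could not install voice commands: " + ex.Message);
        }
    }
}
```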

As I mentioned earlier, I have published a simple note-taking application in the marketplace, and over this series of posts I am adding speech recognition support to it.

In the last post I showed you how to implement voice commands. Now let's get started with the code for in-app speech-to-text integration.

Let's add a button to the application bar, which will be used to start our speech recognition session:

    <phone:PhoneApplicationPage.ApplicationBar>
        <shell:ApplicationBar IsVisible="True" IsMenuEnabled="True">
            <shell:ApplicationBarIconButton IconUri="/Images/appbar.back.png" Text="Back"
                                            Click="ApplicationBarIconButtonBack_Click"/>
            <shell:ApplicationBarIconButton IconUri="/Images/appbar.save.png" Text="Save"
                                            Click="ApplicationBarIconButtonSave_Click"/>
            <shell:ApplicationBarIconButton IconUri="/Images/appbar.microphone.png" Text="Talk"
                                            x:Name="brBtnMic" Click="brBtnMic_Click"/>
            <shell:ApplicationBarIconButton IconUri="/Images/appbar.cancel.png" Text="Cancel"
                                            Click="ApplicationBarIconButtonCancel_Click"/>
        </shell:ApplicationBar>
    </phone:PhoneApplicationPage.ApplicationBar>

In the XAML I added a new button with a microphone image and hooked up a click event to it. Now let's see how the code for our speech input is written.

First you need to add the following namespace to your code:

using Windows.Phone.Speech.Recognition;

Now declare two fields of the following types:

private SpeechRecognizerUI FRecoWithUI;

private SpeechRecognitionUIResult FRecoResult;

SpeechRecognizerUI lets you work with the default speech UI that is built into Windows Phone. In our case we will use the default one because we are implementing a simple scenario.

If you want to build a custom UI, then you should use the SpeechRecognizer class to implement it.

The SpeechRecognitionUIResult class is used to handle the results of the session initiated by the SpeechRecognizerUI class.

Now in the click event for the microphone button we will write the following code:
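The post does not show where FRecoWithUI gets instantiated, so here is one possible way to do it, sketched under a few assumptions: the page class name NewEntryPage is made up, and the three Settings tweaks are optional extras rather than something the app requires. Speech recognition also needs the ID_CAP_SPEECH_RECOGNITION and ID_CAP_MICROPHONE capabilities to be enabled in WMAppManifest.xml.

```csharp
using Microsoft.Phone.Controls;
using Windows.Phone.Speech.Recognition;

// "NewEntryPage" is a hypothetical page name used only for this sketch.
public partial class NewEntryPage : PhoneApplicationPage
{
    private SpeechRecognizerUI FRecoWithUI;

    public NewEntryPage()
    {
        InitializeComponent();

        // Create the recognizer once and reuse it for every session.
        FRecoWithUI = new SpeechRecognizerUI();

        // Optional tweaks to the built-in UI.
        FRecoWithUI.Settings.ListenText = "Dictate your note"; // heading on the listening screen
        FRecoWithUI.Settings.ReadoutEnabled = false;           // do not read the result back aloud
        FRecoWithUI.Settings.ShowConfirmation = true;          // show the confirmation screen
    }
}
```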

    private async void brBtnMic_Click(object sender, EventArgs e)
    {
        // Hint shown to the user in the built-in speech UI.
        FRecoWithUI.Settings.ExampleText = "Please say the contents for your note";

        // Show the UI, listen, and wait for the recognition result.
        FRecoResult = await FRecoWithUI.RecognizeWithUIAsync();

        // Use the text only if recognition succeeded with sufficient confidence.
        if (FRecoResult.ResultStatus == SpeechRecognitionUIStatus.Succeeded
            && FRecoResult.RecognitionResult != null
            && (int)FRecoResult.RecognitionResult.TextConfidence
                >= (int)SpeechRecognitionConfidence.Medium)
        {
            if (String.IsNullOrWhiteSpace(tbkEntry.Text))
                tbkEntry.Text = FRecoResult.RecognitionResult.Text;
            else
                // Separate the appended text from the existing note with a space.
                tbkEntry.Text += " " + FRecoResult.RecognitionResult.Text;
        }
    }

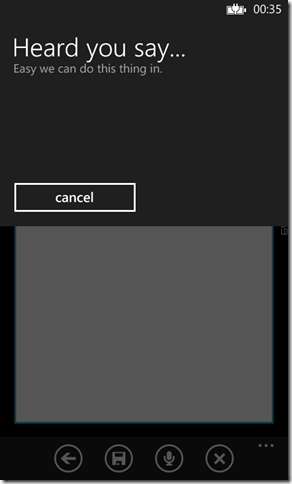

When I click the microphone button, the default UI will be displayed along with the example note mentioned in the first line as shown below

In the second statement we call the RecognizeWithUIAsync method, which is responsible for matching every word we utter against the grammar set in the speech recognizer. Since we didn't add a grammar, it matches the words we speak against the default dictation grammar in Windows Phone. Also, when I am done speaking, the phone shows what it heard by default, and from there you can either continue with the recognized text or start all over again.

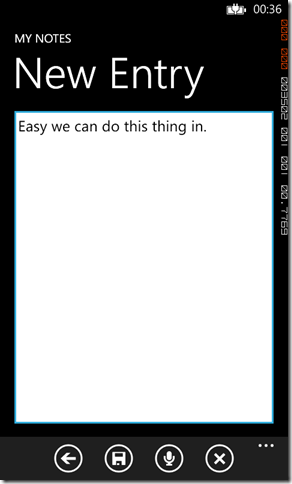
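If you would rather pick the grammar yourself, the recognizer exposes a Grammars collection. The sketch below shows two options: explicitly adding the predefined dictation grammar, or restricting recognition to a short list of phrases. The class name NoteGrammars, the grammar keys and the category words are all made up for illustration. As far as I know, a predefined grammar should not be combined with a list grammar on the same recognizer, so use one or the other.

```csharp
using Windows.Phone.Speech.Recognition;

// Hypothetical helper class; only the Grammars calls come from the phone SDK.
public static class NoteGrammars
{
    public static void UseDictation(SpeechRecognizerUI recognizerUI)
    {
        // Free-form dictation, added explicitly under an arbitrary key.
        recognizerUI.Recognizer.Grammars.AddGrammarFromPredefinedType(
            "dictation", SpeechPredefinedGrammar.Dictation);
    }

    public static void UseCategoryList(SpeechRecognizerUI recognizerUI)
    {
        // A small list grammar: only these phrases can be recognized.
        string[] categories = { "shopping", "work", "personal" }; // made-up values
        recognizerUI.Recognizer.Grammars.AddGrammarFromList("categories", categories);
    }
}
```

Call one of these once, after creating the SpeechRecognizerUI and before calling RecognizeWithUIAsync.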

The third statement checks whether the recognition succeeded and how confident the recognizer is in the result. The text field on the new entry page is populated if and only if the confidence is at least Medium, that is, if the text is reasonably close to what I had spoken.

So we have now successfully converted speech into text, and you can see that it's very simple to implement in your app.
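The accept-or-ignore decision can also be pulled out into a small helper that is easy to test away from the phone. This is only a sketch: the Confidence enum is a stand-in for SpeechRecognitionConfidence so that the code compiles without the phone SDK, and the NoteText class and its method names are hypothetical. It compares confidence levels by name, which avoids depending on the numeric order of the enum members.

```csharp
using System;

// Stand-in for SpeechRecognitionConfidence so the sketch compiles without the phone SDK.
public enum Confidence { Rejected, Low, Medium, High }

// Hypothetical helper class, not part of the original app.
public static class NoteText
{
    // Accept only Medium or High, compared by name rather than by numeric value.
    public static bool IsConfidentEnough(Confidence confidence)
    {
        return confidence == Confidence.Medium || confidence == Confidence.High;
    }

    // Returns the new contents of the text box after a recognition attempt.
    public static string Append(string existing, string recognized, Confidence confidence)
    {
        // Leave the note unchanged when the result is unreliable or empty.
        if (!IsConfidentEnough(confidence) || String.IsNullOrWhiteSpace(recognized))
            return existing ?? String.Empty;

        // An empty note is simply replaced by the recognized text.
        if (String.IsNullOrWhiteSpace(existing))
            return recognized;

        // Otherwise append, separated from the existing text by a single space.
        return existing.TrimEnd() + " " + recognized;
    }
}
```

In the click handler this would be used as tbkEntry.Text = NoteText.Append(tbkEntry.Text, recognizedText, confidence), after mapping the SDK's confidence value onto the stand-in enum.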

You can read more about it here.

Till next time, happy coding….
